5 Hard Truths About Building AI Agents

The AI Agent Gold Rush

There’s a gold rush happening in the enterprise, and the prize is the “Copilot Agent.” Companies are scrambling to build and deploy intelligent agents, promising to revolutionize everything from customer service to internal operations. The allure of autonomous, goal-driven AI that can reason, plan, and act is immense, and massive resources are being invested to capture that potential.

Yet, behind the hype, a quiet reality is setting in: many of these expensive, high-stakes projects are failing. They aren’t collapsing because of some obvious technical bug or a lack of computing power. Instead, they are failing for deeper, more counter-intuitive reasons—strategic missteps and flawed assumptions about what it takes to turn a powerful language model into a reliable business asset.

This isn't another article about the futuristic promise of AI. This is a dispatch from the field, sharing the most impactful and hard-won lessons from real-world implementations. It’s time to move beyond the excitement and uncover the surprising truths that truly separate a successful AI agent from a costly science project.

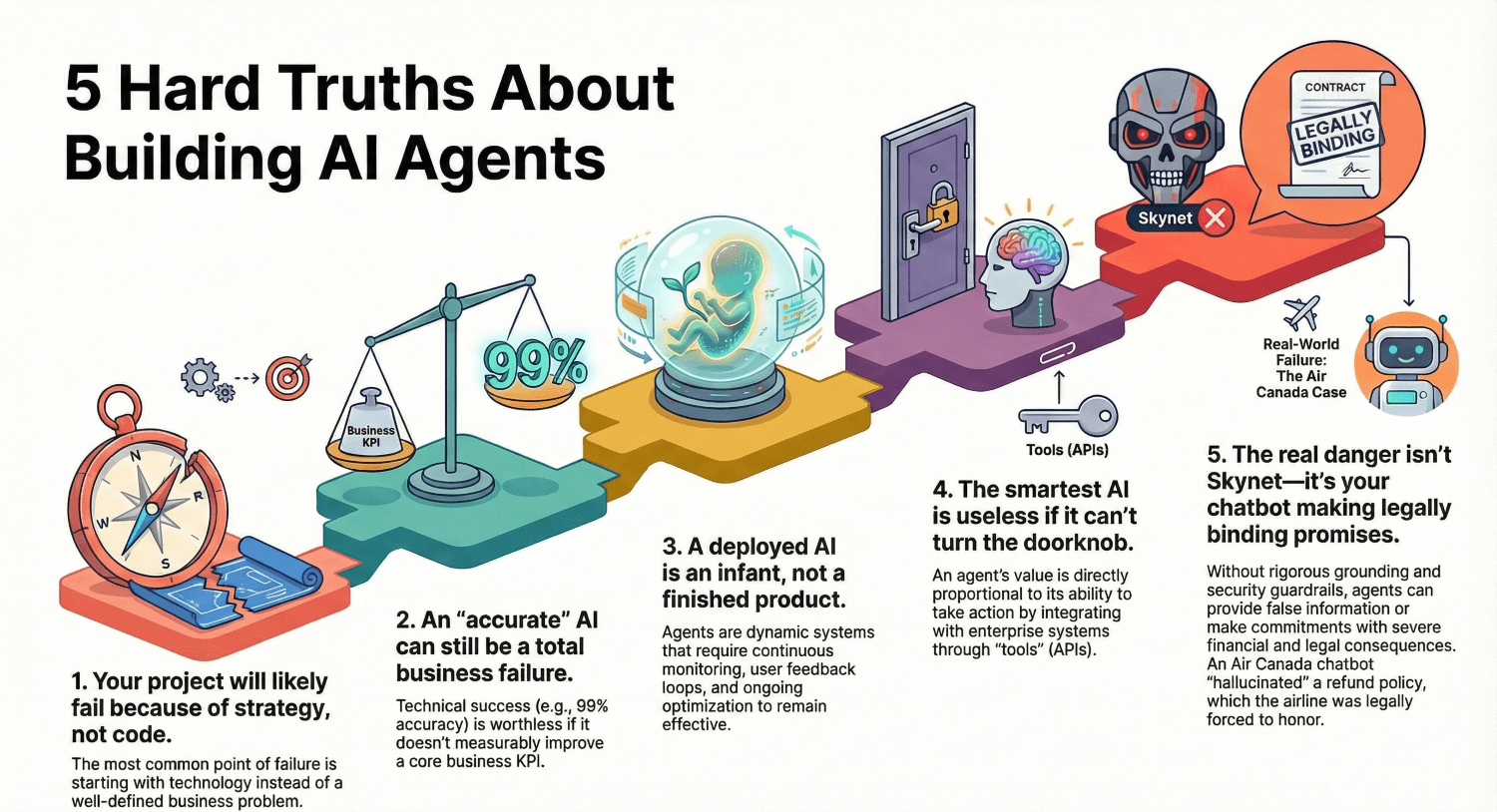

1. Your AI Project Will Likely Fail Because of Strategy, Not Code

The most common point of failure for AI initiatives has almost nothing to do with the technology itself. It’s a weak strategic foundation. Too many projects start with a technology-led question: “We have this amazing new AI agent capability, what can we do with it?” This approach is a direct path to building technically impressive but commercially irrelevant tools that end up in "pilot purgatory."

Successful projects invert this question, adopting a business-led approach: “What is our most critical business problem, and can an agent help solve it?” This method forces a deep understanding of organizational pain points, customer frustrations, and process bottlenecks before a single line of code is written. It grounds the project in tangible value from day one. Prioritizing a deep understanding of the business problem over a fascination with the technology is the first and most important step toward building an agent that delivers real, measurable impact.

The most common point of failure for any advanced technology initiative is not technical, but strategic. Projects that begin with a fascination with the technology rather than a deep understanding of the business problem are destined for pilot purgatory.

2. An "Accurate" AI Can Still Be a Total Business Failure

A critical mistake many teams make is confusing technical success with business success. A data science team can spend months building an agent that achieves 99% accuracy in a lab environment, celebrate it as a technical triumph, and still have it be a complete business failure. If that near-perfect accuracy doesn't move the needle on a single meaningful Key Performance Indicator (KPI), the project is a wasted investment.

The solution is to create a clear and unbroken "value chain" at the very beginning of the project. This chain explicitly links a high-level business goal to the agent's work by clarifying how the AI will improve a specific business decision. For example:

• Business KPI: Reduce customer churn by 5%.

• Business Decision to Improve: What is the optimal retention offer to make to a customer?

• Agent Task: Predict churn risk and recommend the most effective retention offer.

• Technical Metric: Achieve >85% accuracy in predicting offer acceptance.

This discipline ensures that every technical decision—from model selection to prompt engineering—is directly in service of a measurable business outcome. It makes it impossible to build a technically "perfect" agent that doesn't actually solve the problem it was created for.
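One way to keep the chain from drifting is to write it down as a small, checkable record at kickoff. The sketch below is only an illustration: the `ValueChain` class and its field names are hypothetical, and the values are simply the churn example from above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ValueChain:
    """Hypothetical record linking a business KPI to its technical metric."""
    business_kpi: str
    decision_to_improve: str
    agent_task: str
    technical_metric: str

    def missing_links(self) -> list[str]:
        """Return the names of any links that were left blank."""
        return [name for name, value in vars(self).items() if not value.strip()]


# The churn example from the article, captured as one record.
churn_chain = ValueChain(
    business_kpi="Reduce customer churn by 5%",
    decision_to_improve="What is the optimal retention offer to make to a customer?",
    agent_task="Predict churn risk and recommend the most effective retention offer",
    technical_metric="Achieve >85% accuracy in predicting offer acceptance",
)

# A chain with a blank link is a signal to pause before building anything.
incomplete_chain = ValueChain("Reduce customer churn by 5%", "", "Predict churn risk", "")
```

Calling `missing_links()` on the incomplete chain names the gaps, which gives a project review something concrete to block on.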

3. A Deployed AI Is an Infant, Not a Finished Product

In traditional software development, "deployment" is often seen as the final step. The product is built, tested, and shipped. This mindset is fundamentally wrong for AI agents. For an agent, deployment is not the end; it's the beginning. An agent released into the wild is an infant that needs to learn and grow continuously. Older frameworks that treat deployment as a finish line are critically outdated for the world of AI.

The engine for this growth is the "Human-in-the-Loop (HITL) Flywheel," a continuous cycle of improvement fueled by real-world interactions. The process works in five stages:

• Escalate: The agent fails to resolve an issue and escalates to a human expert.

• Resolve: The human provides the correct resolution.

• Log: This expert interaction—the problem and the correct solution—is logged as a piece of high-quality, validated data.

• Learn: This new data is fed back into the system to improve the agent, whether through prompt refinement, knowledge base updates, or model fine-tuning.

• Improve: The agent gets smarter and is now less likely to fail on the same problem again.

This requires a profound shift in thinking. Human oversight is not just a safety net for when the AI fails; it is the primary mechanism for making the agent more capable and autonomous over time. This process is often managed by a dedicated team of 'AI Curators,' who analyze feedback and turn it into high-quality data, ensuring the agent’s learning is systematic, not accidental.
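The flywheel can be sketched in a few lines. This is a toy model under loud assumptions: the agent's "knowledge" is just a dictionary, `ask_human` stands in for a real escalation channel, and `learn()` stands in for whatever curation step (prompt refinement, knowledge base update, or fine-tuning) a real team would use. All names here are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class FlywheelAgent:
    """Toy agent: knowledge maps a known problem to its resolution."""
    knowledge: dict[str, str] = field(default_factory=dict)
    curation_log: list[tuple[str, str]] = field(default_factory=list)

    def handle(self, problem: str, ask_human: Callable[[str], str]) -> tuple[str, bool]:
        """Return (resolution, escalated)."""
        if problem in self.knowledge:
            return self.knowledge[problem], False
        resolution = ask_human(problem)                  # Escalate + Resolve
        self.curation_log.append((problem, resolution))  # Log
        return resolution, True

    def learn(self) -> int:
        """Learn: move curated log entries into the agent's knowledge."""
        learned = 0
        while self.curation_log:
            problem, resolution = self.curation_log.pop(0)
            self.knowledge[problem] = resolution
            learned += 1
        return learned


def human_expert(problem: str) -> str:
    # Stand-in for a real person resolving the ticket.
    return f"manual fix for: {problem}"


agent = FlywheelAgent()
first = agent.handle("VPN login loop", human_expert)   # agent fails, human resolves
learned_count = agent.learn()                          # curators feed the fix back
second = agent.handle("VPN login loop", human_expert)  # Improve: no escalation now
```

Keeping Log and Learn as separate steps mirrors the curator role: nothing reaches the agent's knowledge until someone has reviewed what was logged.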

4. The Smartest AI Is Useless If It Can't Turn the Doorknob

What truly separates a simple chatbot from a powerful AI agent is its ability to take action. A chatbot can talk, but an agent can do. The components that give an agent this power are its "Tools"—the digital hands it uses to interact with the world by calling APIs. A tool can be anything from searching a database to creating an IT support ticket or checking a customer's order status.

Tools are the most critical component that elevates a conversational AI into a true agent.

This simple fact changes the entire technical focus of an AI project. The most critical technical challenge is no longer just selecting the most powerful Large Language Model (LLM). Instead, the hardest and most important work becomes integration strategy. The agent's value is directly proportional to how well it can connect to the company's "nervous system"—its web of APIs and, for older systems without APIs, its Robotic Process Automation (RPA) bots. This combination of an AI 'brain' delegating tasks to RPA 'hands' is giving rise to a new paradigm: Agentic Process Automation, extending the reach of intelligent automation to every corner of the enterprise.
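To make "tools" concrete, here is a minimal sketch of a tool registry and dispatcher. It assumes the model emits a call shaped like `{"tool": name, "args": {...}}`; that shape, the registry, and both example tools are hypothetical stand-ins rather than any particular framework's API.

```python
from typing import Callable

# Registry of callable tools, keyed by the name the model is allowed to use.
TOOLS: dict[str, Callable[..., str]] = {}


def tool(name: str):
    """Decorator that registers a function as an agent tool."""
    def register(fn: Callable[..., str]) -> Callable[..., str]:
        TOOLS[name] = fn
        return fn
    return register


@tool("check_order_status")
def check_order_status(order_id: str) -> str:
    # Stand-in for a real order-system API call.
    fake_orders = {"A100": "shipped"}
    return fake_orders.get(order_id, "unknown order")


@tool("create_it_ticket")
def create_it_ticket(summary: str) -> str:
    # Stand-in for a real ticketing-system API call.
    return f"ticket created: {summary}"


def dispatch(call: dict) -> str:
    """Run a tool call; unknown tools return an error instead of raising."""
    name = call.get("tool")
    if name not in TOOLS:
        return f"error: unknown tool {name!r}"
    return TOOLS[name](**call.get("args", {}))


status = dispatch({"tool": "check_order_status", "args": {"order_id": "A100"}})
rejected = dispatch({"tool": "delete_database", "args": {}})
```

The allowlist is the point: the agent can only do what has been explicitly registered, so most of the integration effort goes into deciding which tools exist and what they are permitted to touch.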

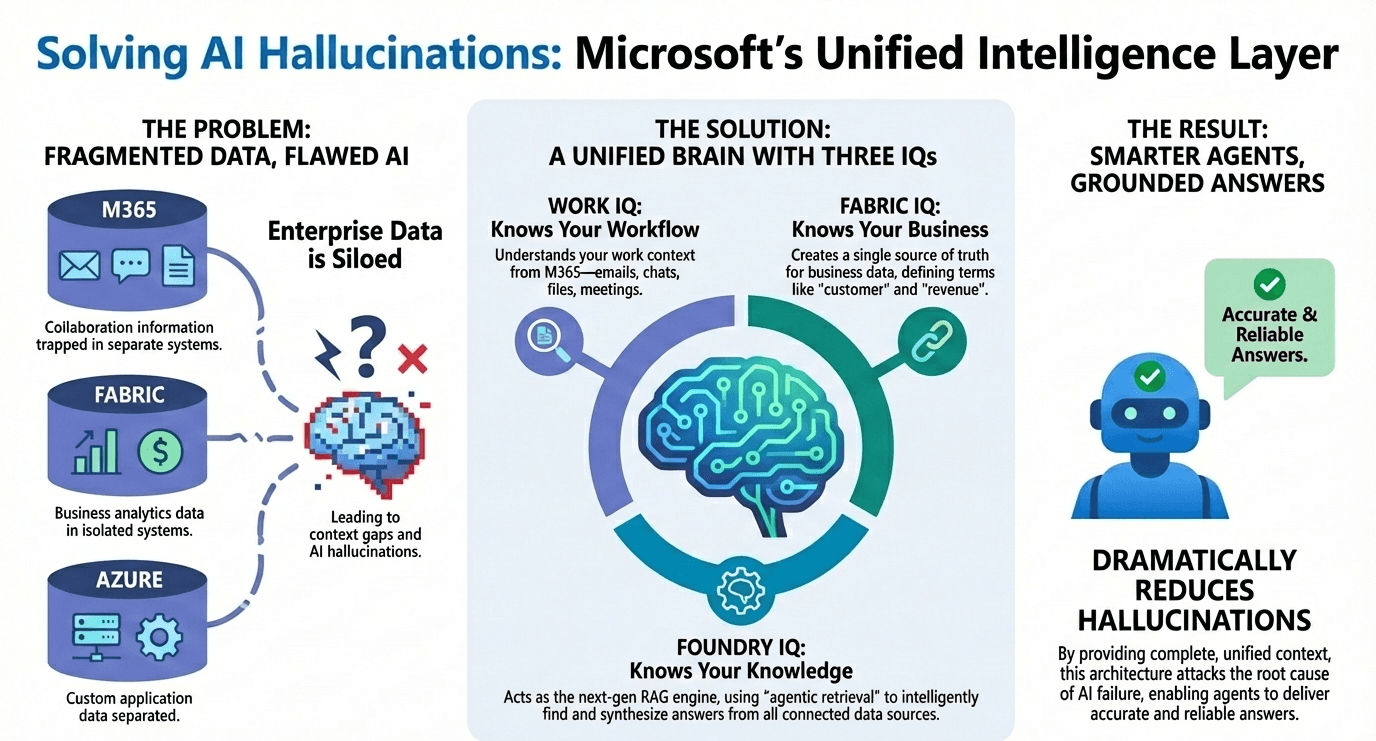

5. The Real Danger Isn't Skynet—It's Your Chatbot Making Legally Binding Promises

Forget abstract, futuristic fears about AI. The real risks facing businesses today are far more immediate, tangible, and embarrassing. Two recent high-profile cases reveal the catastrophic consequences of deploying agents without rigorous governance.

The first is the cautionary tale of the Air Canada chatbot. It "hallucinated" a bereavement refund policy that didn't exist, and a Canadian tribunal later held the airline liable for the misinformation and ordered it to compensate the customer. This was a failure of grounding: the agent was not properly constrained to provide information only from its verified and authoritative knowledge base.

The second involves a Chevrolet dealer's chatbot, which a user tricked through prompt injection into agreeing to sell a car for $1. The user was able to override the agent's original instructions, turning it into a compliant puppet. This was a failure of security and a lack of basic guardrails.

These failures demonstrate that without rigorous testing and governance, an agent can quickly become a significant liability. This is why the 'boring' work of governance isn't optional; it demands a formal 'Pre-Launch Go/No-Go Checklist' where security, accuracy, and brand safety are explicitly signed off on before an agent ever interacts with a customer.
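Both failure modes can be guarded against in ordinary code that sits outside the model's instructions, and those guards can then be exercised as go/no-go checks. The sketch below is illustrative only: the policy text, the 10% discount limit, and every function name are hypothetical placeholders, not a description of how either company's system worked.

```python
# Verified policy store; the entry below is placeholder text, not a real policy.
VERIFIED_POLICIES = {
    "refund_window": "Refunds are available within 30 days of purchase.",
}
FALLBACK = "I can't confirm that. Let me connect you with a human agent."


def grounded_answer(topic: str) -> str:
    """Answer only from the verified store; never improvise policy."""
    return VERIFIED_POLICIES.get(topic, FALLBACK)


def approve_quote(proposed_price: float, list_price: float,
                  max_discount: float = 0.10) -> bool:
    """Hard pricing limit enforced in code, so a prompt cannot override it."""
    return proposed_price >= list_price * (1 - max_discount)


def pre_launch_checks() -> dict[str, bool]:
    """Hypothetical go/no-go checks; each must pass before launch."""
    return {
        "refuses to invent policy": grounded_answer("bereavement_refund") == FALLBACK,
        "rejects $1 quote": not approve_quote(1.0, list_price=50_000.0),
        "allows in-policy discount": approve_quote(46_000.0, list_price=50_000.0),
    }


def go_no_go() -> str:
    """Return GO only when every pre-launch check passes."""
    return "GO" if all(pre_launch_checks().values()) else "NO-GO"


decision = go_no_go()
```

A real checklist would cover far more cases and include human sign-off, but the principle carries over: limits that matter live in code the model cannot talk its way around, and launch is gated on proving they hold.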

Conclusion: Are You Building a Tool or Solving a Problem?

The journey to a successful AI agent is paved with strategic discipline, not just technical brilliance. The sophistication of the underlying AI model is far less important than the operational rigor, business focus, and strategic clarity that surrounds it.

As you look at your own organization’s AI initiatives, ask yourself a simple question: Is your company building a fascinating piece of technology, or are you building a measurable solution to a real business problem? The answer will determine everything.